Delivering the Pumpkin Before Halloween: Why Lead Time for Changes Dictates Engineering Value

Lead time for changes measures the clock from commit to production. It exposes hidden bottlenecks like manual QA and stagnant PRs. By tracking this DORA metric, teams are forced to automate pipelines and remove approval meetings, ensuring features reach customers while the market need still exists.

A pumpkin delivered on November 1st is just organic waste. It does not matter how perfectly shaped or orange it is; the window of utility has closed. In software, we ship 'November pumpkins' every time a feature sits in a pipeline for weeks while a customer's problem grows or the market shifts. Lead time for changes, one of the core DORA metrics, is the diagnostic tool that exposes this decay. It tracks exactly how long code spends in a 'committed' state before it actually creates value in production.

What is lead time for changes and why should I track it?

Lead time for changes is the total duration from a code commit to that code running in a production environment. It is the definitive measurement of your delivery efficiency, separating teams that ship in hours from those that languish for weeks.

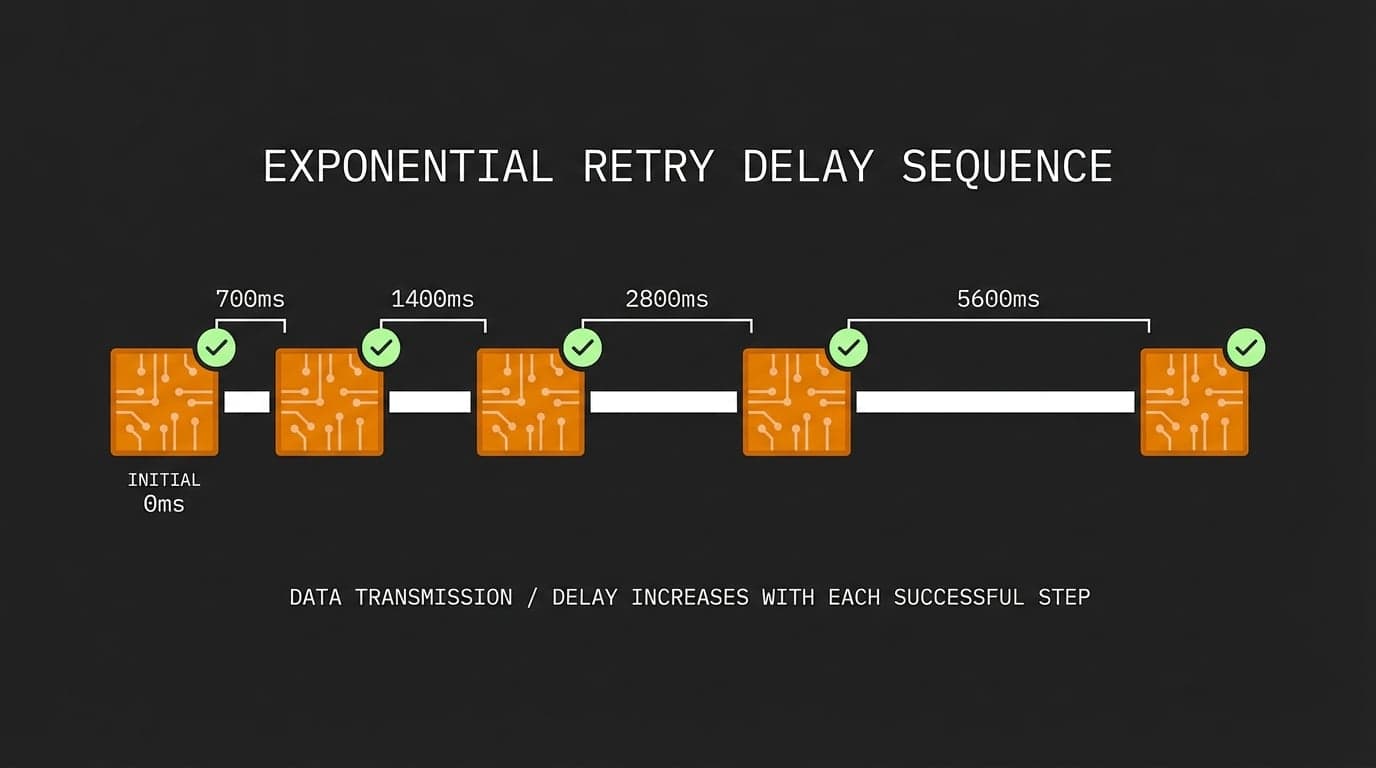

If you are taking weeks to move code from a developer's machine to a live server, your process is broken. Elite teams aim for a lead time of under one hour. Tracking this does not just give you a number; it gives you a map of your technical debt and process friction. When a commit takes ten days to reach a user and the work itself took less than a day, you are forced to ask where the code sat idle for the other nine.
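As a minimal sketch of the definition above, the calculation is a subtraction between two timestamps. The function name `leadTimeHours` and the ISO 8601 inputs are assumptions made for illustration; your own tooling may store these values differently.

```javascript
// Lead time for changes: elapsed time between a commit and its production deploy.
// Inputs are ISO 8601 strings; the function name is illustrative, not a standard API.
const MS_PER_HOUR = 60 * 60 * 1000;

function leadTimeHours(committedAt, deployedAt) {
  const committed = Date.parse(committedAt);
  const deployed = Date.parse(deployedAt);
  if (Number.isNaN(committed) || Number.isNaN(deployed)) {
    throw new Error('Both timestamps must be valid ISO 8601 strings');
  }
  if (deployed < committed) {
    throw new Error('Deployment cannot precede the commit');
  }
  return (deployed - committed) / MS_PER_HOUR;
}

// A commit made on 1 March that reaches production on 11 March.
const hours = leadTimeHours('2024-03-01T09:00:00Z', '2024-03-11T09:00:00Z');
console.log(`${hours} hours (${hours / 24} days)`); // prints "240 hours (10 days)"
```

The number on its own says little; its value comes from comparing it with how long the change actually took to write.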

Where does code usually get stuck in the delivery pipeline?

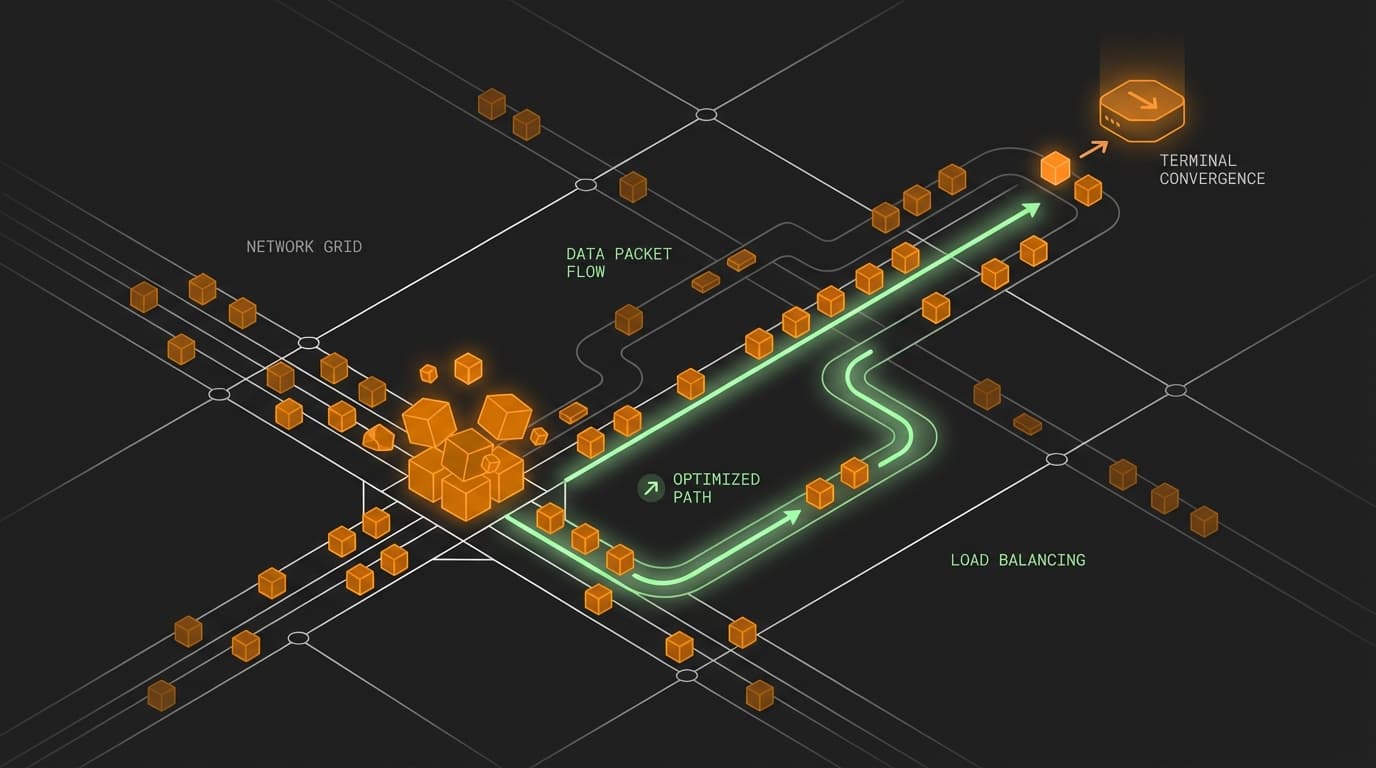

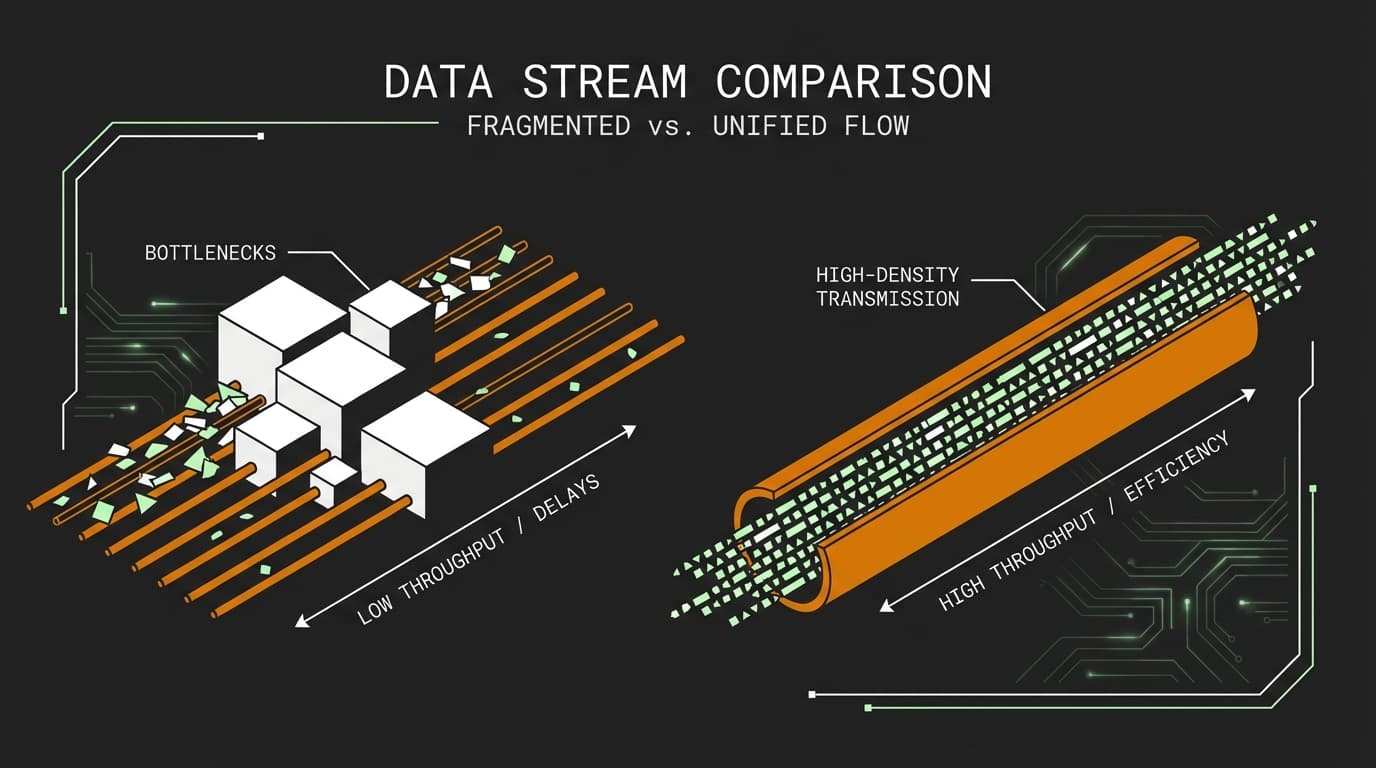

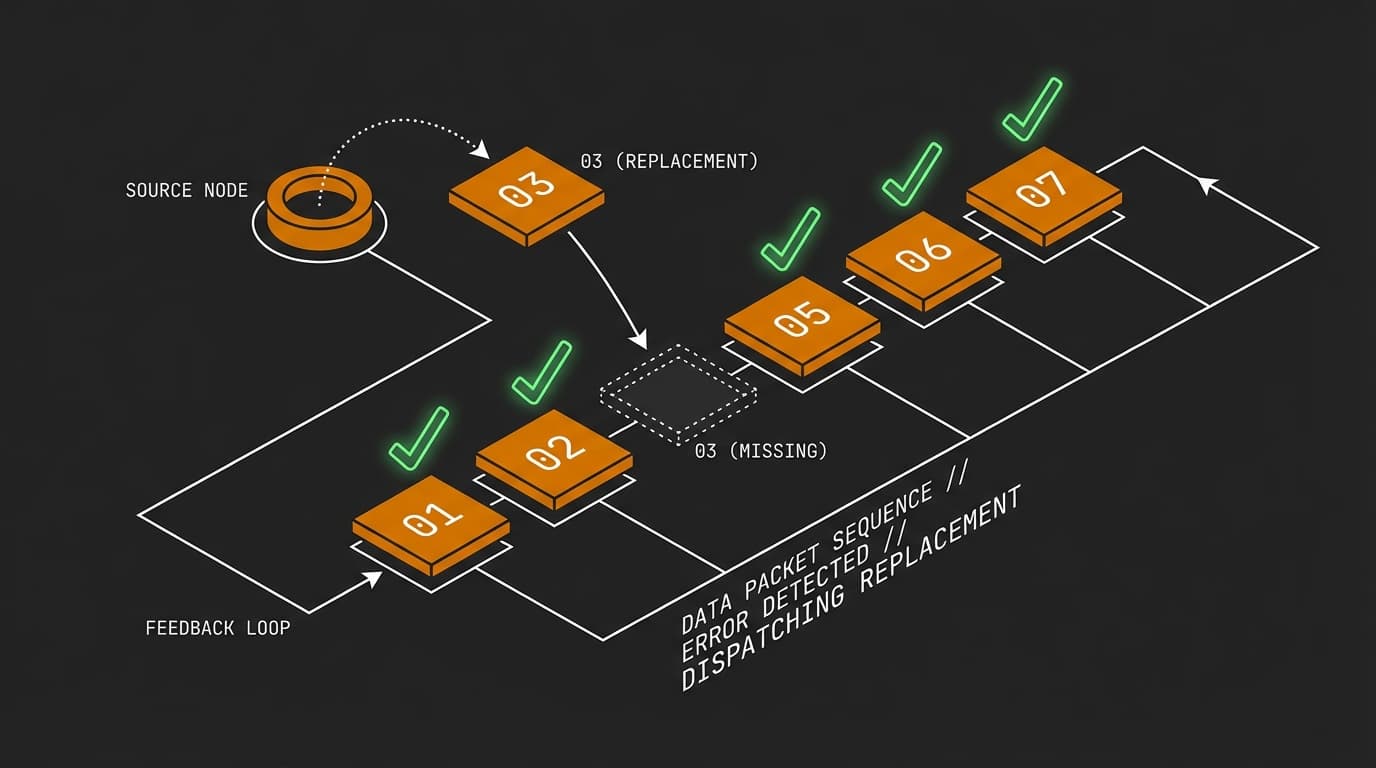

Code typically rots in queues: pull requests waiting for review, builds waiting for manual testing, and releases waiting for approval. These bottlenecks are often invisible to leadership until you start comparing the timestamp of a commit with the timestamp of its successful production deployment.

Imagine a scenario where an engineer fixes a critical bug in a payment gateway. The code is ready in twenty minutes, but it sits in a PR queue for two days. Then, it waits for a weekly manual QA cycle. Finally, it sits in a queue for a Change Advisory Board (CAB) meeting on Friday. By the time it hits production, the bug has already impacted thousands of users. Lead time measurement makes this 'dead time' impossible to ignore.
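A scenario like this can be broken into per-stage waits once each handoff is timestamped. The sketch below assumes a hypothetical timeline; the stage names and every timestamp are invented for illustration and do not come from a real system.

```javascript
// Hypothetical timeline for one change. Every timestamp is invented for illustration.
const events = [
  { stage: 'committed', at: '2024-03-04T10:00:00Z' },    // Monday: fix is ready
  { stage: 'pr_approved', at: '2024-03-06T10:00:00Z' },  // two days in the review queue
  { stage: 'qa_passed', at: '2024-03-11T15:00:00Z' },    // waited for the weekly QA cycle
  { stage: 'cab_approved', at: '2024-03-15T14:00:00Z' }, // Friday CAB meeting
  { stage: 'deployed', at: '2024-03-15T16:00:00Z' },
];

// Returns the hours spent between each consecutive pair of events.
function stageDurations(timeline) {
  const durations = [];
  for (let i = 1; i < timeline.length; i++) {
    const start = Date.parse(timeline[i - 1].at);
    const end = Date.parse(timeline[i].at);
    durations.push({
      from: timeline[i - 1].stage,
      to: timeline[i].stage,
      hours: (end - start) / 3600000,
    });
  }
  return durations;
}

const durations = stageDurations(events);
const longest = durations.reduce((a, b) => (b.hours > a.hours ? b : a));
for (const d of durations) console.log(`${d.from} -> ${d.to}: ${d.hours}h`);
console.log(`Longest wait: ${longest.from} -> ${longest.to}`);
```

In this made-up timeline the wait for manual QA dominates, which is the kind of finding that tells a team where to invest first.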

| Bottleneck | Impact | Optimization |
|---|---|---|
| Stagnant PRs | Days of idle time | Atomic PRs + Review SLAs |
| Manual QA | Linear scaling bottleneck | Automated integration suites |
| CAB Meetings | Fixed-interval delays | Automated deployment gates |
| Large Batches | Increased merge conflict risk | Continuous Integration (CI) |
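A review SLA only helps if something checks it. As a rough sketch, the function below flags open pull requests that have waited longer than a threshold. The PR shape (`id`, `openedAt`), the function name, and the 24-hour default are all assumptions; a real version would pull this data from your code host.

```javascript
// Flag open pull requests that have waited longer than a review SLA.
// The PR shape ({ id, openedAt }) and the 24-hour default are illustrative assumptions.
function findStalePullRequests(pullRequests, now, slaHours = 24) {
  const nowMs = now.getTime();
  return pullRequests
    .map((pr) => ({ ...pr, waitingHours: (nowMs - Date.parse(pr.openedAt)) / 3600000 }))
    .filter((pr) => pr.waitingHours > slaHours)
    .sort((a, b) => b.waitingHours - a.waitingHours); // longest wait first
}

// Sample data, invented for illustration.
const openPrs = [
  { id: 101, openedAt: '2024-03-04T09:00:00Z' },
  { id: 102, openedAt: '2024-03-06T08:00:00Z' },
  { id: 103, openedAt: '2024-03-05T06:00:00Z' },
];

const stale = findStalePullRequests(openPrs, new Date('2024-03-06T12:00:00Z'));
console.log(stale.map((pr) => `#${pr.id}: ${pr.waitingHours}h`));
```

Passing `now` in as an argument rather than reading the clock inside the function keeps the check deterministic and easy to test.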

How does measuring lead time force process optimization?

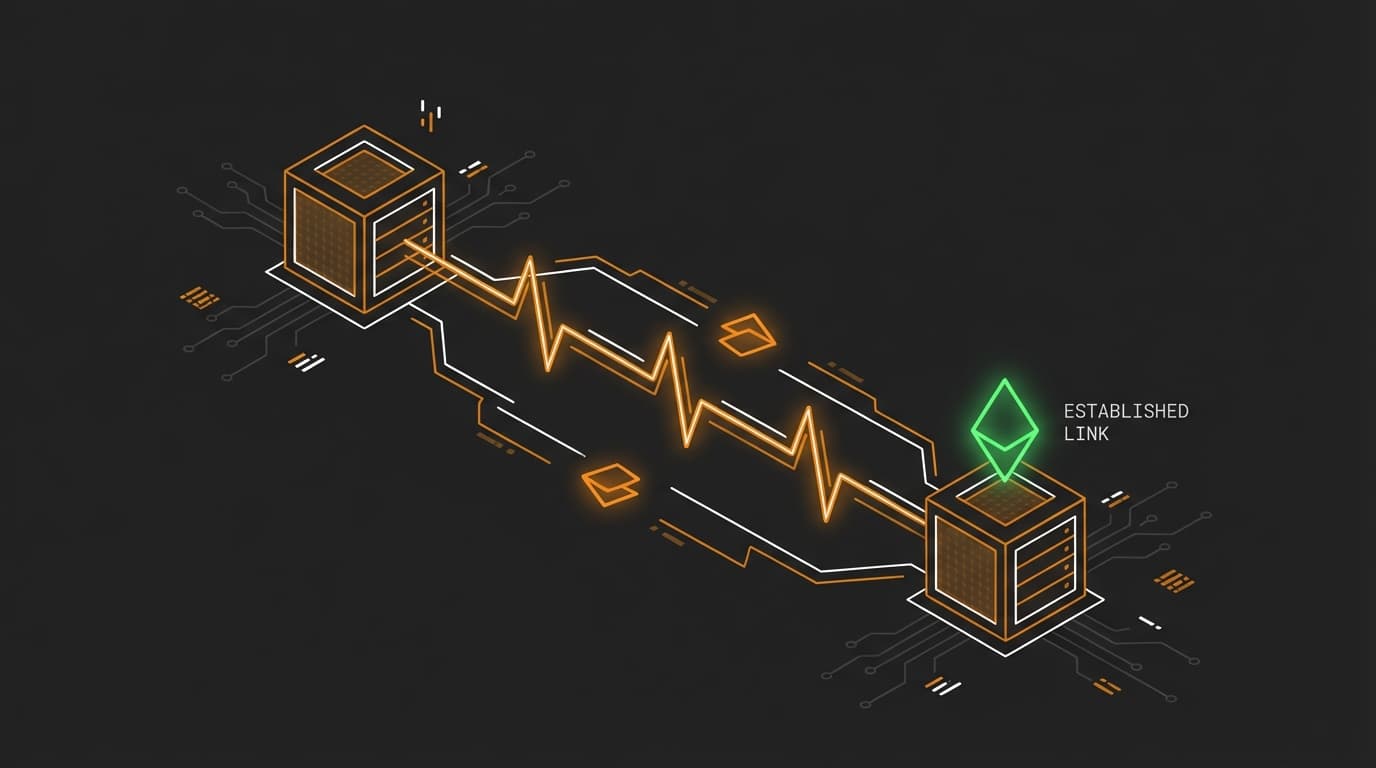

Measuring lead time acts as a catalyst for automation because it highlights exactly where human intervention slows down the machine. To move from weeks to hours, you have to replace manual 'sign-offs' with automated gates and robust CI/CD pipelines.

Instead of waiting for a manual tester to click through a staging site, you invest in a test suite that runs on every push. Instead of a weekly release meeting, you move to a model where a green build is the only approval needed. Here is a conceptual way to track this transition within a deployment script:

```javascript
// Notify a DORA tracker when a deployment succeeds
const logDeployment = async (commitId) => {
  const response = await fetch('https://api.metrics.dev/v1/deployments', {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ commit: commitId, stage: 'production' })
  });
  // response.ok is true for any 2xx status
  return response.ok;
};
```
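The "green build is the only approval" idea can be sketched the same way. The gate below approves a deployment only when every required check reports `passed`. The check names and the result shape are hypothetical, and in practice a CI provider's own branch protection or required-status settings may already cover this.

```javascript
// A deployment gate that replaces a manual sign-off:
// deploy only if every required check passed.
// Check names and the result shape are hypothetical.
const REQUIRED_CHECKS = ['unit-tests', 'integration-tests', 'security-scan'];

function evaluateGate(checkResults, requiredChecks = REQUIRED_CHECKS) {
  // A check that is missing from the results counts as blocking, same as a failure.
  const blocking = requiredChecks.filter((name) => checkResults[name] !== 'passed');
  return { approved: blocking.length === 0, blocking };
}

const decision = evaluateGate({
  'unit-tests': 'passed',
  'integration-tests': 'passed',
  'security-scan': 'failed',
});
console.log(decision); // approved is false; blocking lists 'security-scan'
```

Treating a missing result as a failure is a deliberate choice here: a gate that approves when a check never ran is weaker than the meeting it replaces.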

Frequently Asked Questions

Is lead time for changes the same as cycle time?

Cycle time generally refers to the time from starting work to finishing it, while lead time for changes is specific to the 'delivery' phase—starting at the commit. In the DORA framework, we focus on the commit-to-prod window because it specifically measures the efficiency of your delivery pipeline.

Will faster lead times lead to more production outages?

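Not if the speed comes from smaller, more frequent changes, which is how short lead times are usually achieved. Smaller changes are easier to reason about, easier to test, and significantly faster to roll back if a regression occurs. DORA's research has consistently found that delivery speed and stability tend to improve together rather than trade off against each other.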

How do I start measuring this if my process is manual?

Start by logging two timestamps: when a change is committed and when that commit is successfully deployed to production. If you can, also record when it merges to your main branch, which splits the total into time spent waiting for review and time spent waiting for release. Even a simple spreadsheet or a basic script querying your CI provider can reveal the 'dead zones' where your code is currently waiting for a human to act.
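A spreadsheet export is enough to get a first number. The sketch below assumes a CSV with three columns (commit SHA, start timestamp, deploy timestamp); the column layout and all sample values are made up for illustration. It reports the median rather than the mean so that one very slow change does not hide the typical case.

```javascript
// Rows as they might be exported from a spreadsheet.
// The column layout and sample values are invented for illustration.
const csv = `sha,committed_at,deployed_at
a1b2c3,2024-03-01T09:00:00Z,2024-03-01T15:00:00Z
d4e5f6,2024-03-02T10:00:00Z,2024-03-12T10:00:00Z
0a9b8c,2024-03-05T08:00:00Z,2024-03-06T08:00:00Z`;

// Turns each data row into { sha, hours }, skipping the header line.
function parseRows(text) {
  const [, ...lines] = text.trim().split('\n');
  return lines.map((line) => {
    const [sha, committedAt, deployedAt] = line.split(',');
    return { sha, hours: (Date.parse(deployedAt) - Date.parse(committedAt)) / 3600000 };
  });
}

// Median of a non-empty list of numbers.
function median(values) {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

const rows = parseRows(csv);
const medianHours = median(rows.map((r) => r.hours));
const slowest = rows.reduce((a, b) => (b.hours > a.hours ? b : a));
console.log(`Median lead time: ${medianHours}h; slowest change: ${slowest.sha} (${slowest.hours}h)`);
```

The slowest row is worth printing alongside the median because it points at a specific change whose history you can trace by hand.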