How Face ID Works: Infrared Lasers & Neural Networks

TL;DR: Face ID does not compare ordinary selfies to unlock your phone. Instead, it projects more than 30,000 invisible infrared dots onto your face to build a precise 3D geometry map. A neural network converts this map into a mathematical feature vector and compares it with the vector saved when you enrolled your face to confirm your identity.

Most people think their phone unlocks by snapping a quick selfie and playing a game of spot-the-difference. I get it—from a user perspective, that’s exactly what it feels like. But under the hood, modern facial authentication relies on an entirely different stack of hardware and software. It’s not looking at your face; it’s reading your face's topography like a surveyor mapping a mountain range. Let's break down the actual engineering happening in the fraction of a second it takes to unlock your screen.

How does Face ID map your face in the dark?

Face ID uses infrared (IR) light rather than visible light, so it does not depend on room lighting. A dot projector casts more than 30,000 invisible infrared dots onto your skin, and an infrared flood illuminator lights your face for the camera, which lets the system work even in pitch-black conditions. The dot pattern is what produces an accurate 3D topographic map of your features.

Visible-light cameras are useless without an external light source. If you’ve ever tried to build a computer vision pipeline around a basic webcam in a dark room, you know the struggle. To remove that dependency on ambient light, Face ID brings its own infrared source. Think of it as dropping a massive, invisible net of laser points over your face. This technique is called structured light: because the hardware knows exactly what dot pattern it projected, it can measure how far each dot appears shifted when it lands on your nose, cheekbones, and jawline. Those shifts are triangulated into depth, mapping the contours of your face down to half a millimeter.
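Apple does not publish the TrueDepth camera's calibration, but the textbook way a structured-light system turns dot displacement into distance is triangulation: with a camera focal length f (in pixels), a projector-to-camera baseline B, and an observed dot shift d, the depth is Z = f * B / d. The sketch below assumes hypothetical calibration values (`FOCAL_LENGTH_PX`, `BASELINE_MM`) and a known reference position for each dot; it illustrates the geometry, not Face ID's actual processing.

```python
from typing import List

# Hypothetical calibration values, chosen only for illustration.
FOCAL_LENGTH_PX = 600.0  # IR camera focal length, in pixels
BASELINE_MM = 25.0       # distance between dot projector and IR camera, in mm

def depth_from_disparity(disparity_px: float,
                         focal_length_px: float = FOCAL_LENGTH_PX,
                         baseline_mm: float = BASELINE_MM) -> float:
    """Triangulate the depth (mm) of one dot from its pixel shift: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the camera")
    return focal_length_px * baseline_mm / disparity_px

def depth_map(reference_x: List[float], observed_x: List[float]) -> List[float]:
    """Turn per-dot horizontal shifts into per-dot depths (mm).

    reference_x: where each dot would appear if the surface were infinitely far away.
    observed_x:  where the IR camera actually sees each dot.
    """
    return [depth_from_disparity(obs - ref) for ref, obs in zip(reference_x, observed_x)]

# A dot shifted 50 px lies at 600 * 25 / 50 = 300 mm; one shifted 60 px is closer, at 250 mm.
print(depth_map([100.0, 200.0], [150.0, 260.0]))  # [300.0, 250.0]
```

Note the inverse relationship: nearer surfaces shift their dots more, which is what lets a nose tip stand out from the cheeks.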

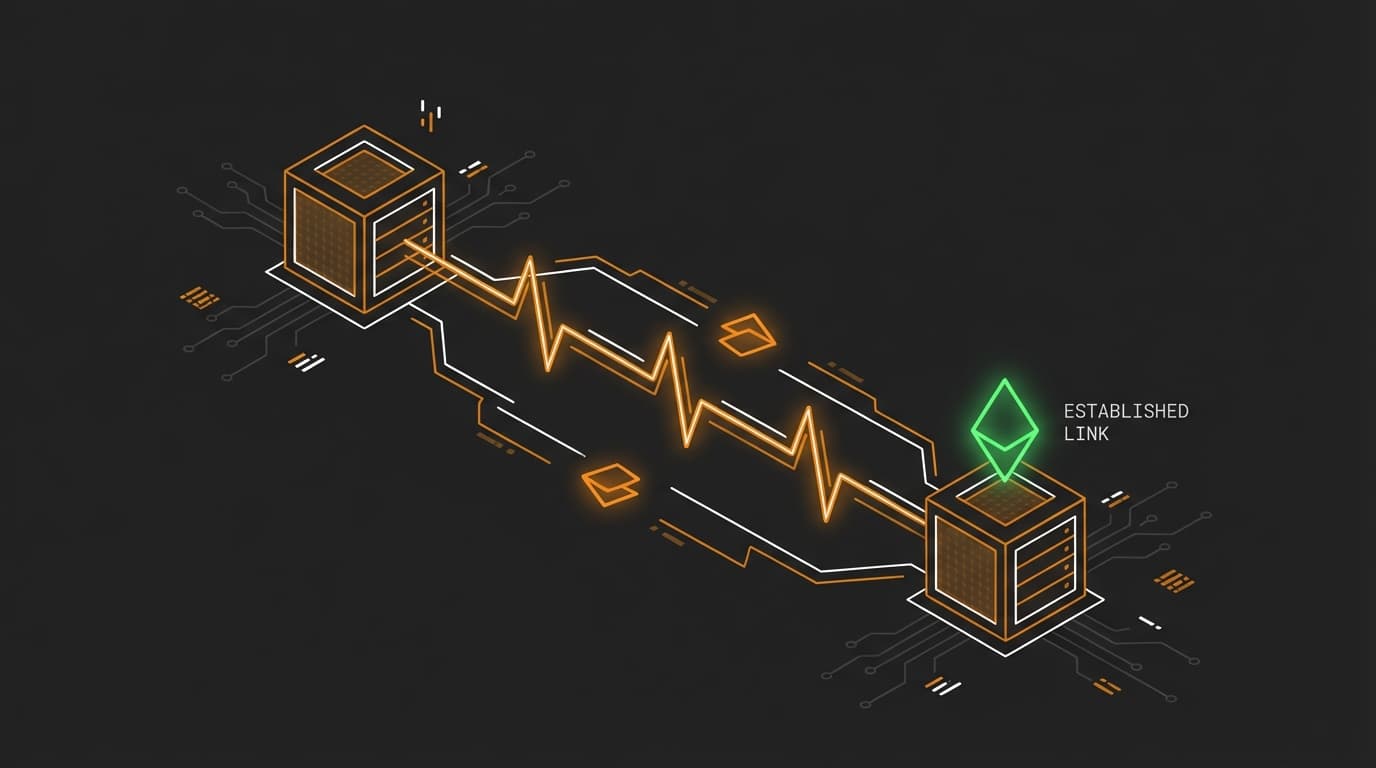

What happens to the scattered infrared data?

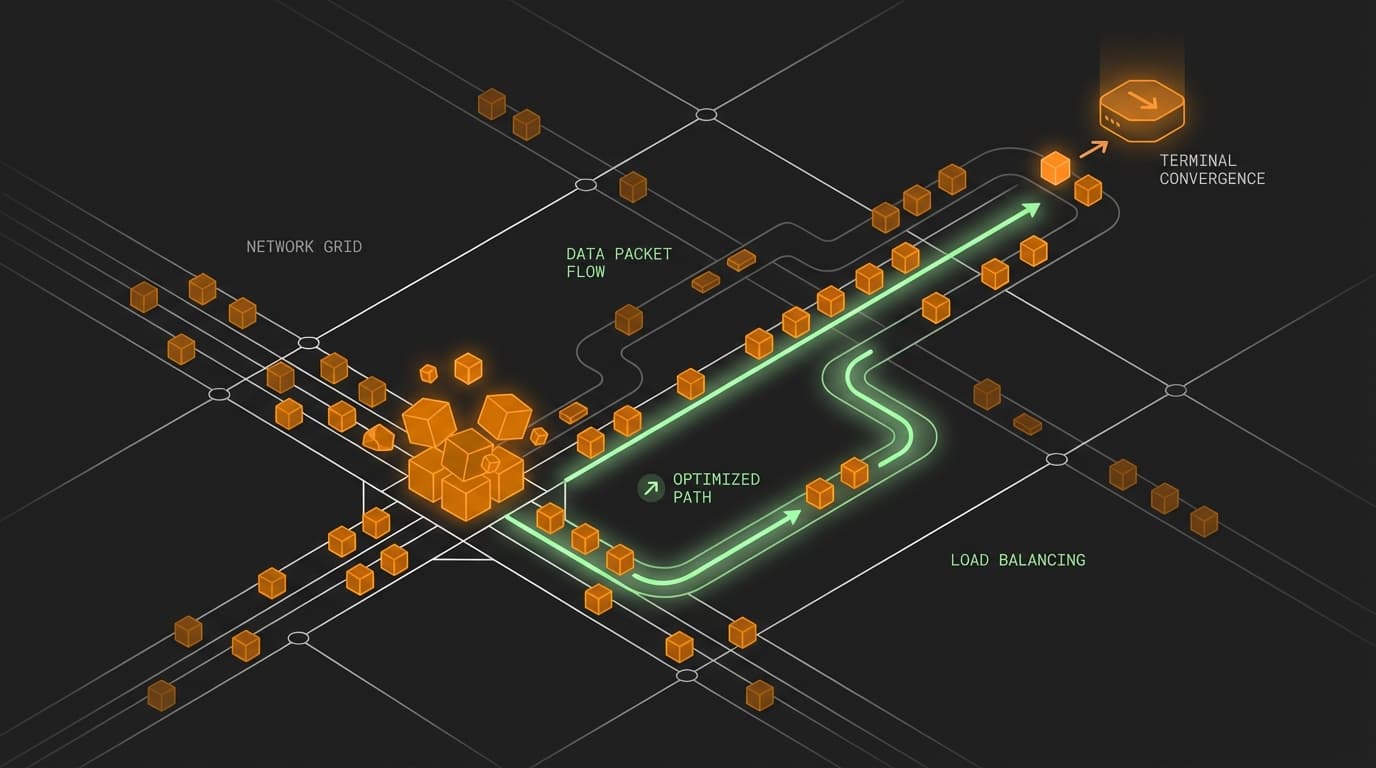

A dedicated infrared camera captures the reflected dot pattern, and the system turns it into a raw 3D geometry map. That map is then fed into an onboard neural network, which translates it into a compact numeric representation called a feature vector. Working with vectors makes each comparison a fast arithmetic operation instead of heavy 3D geometry processing.

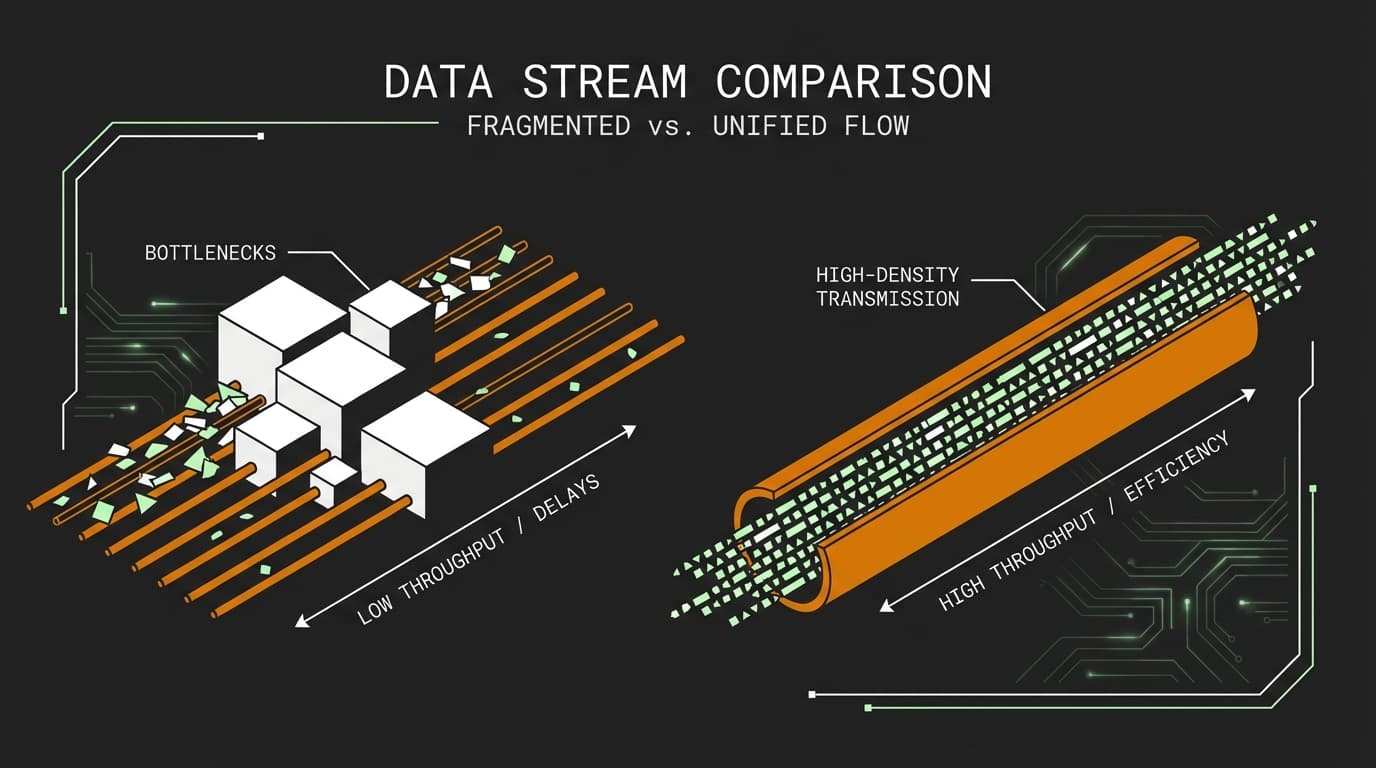

Raw infrared data isn't very useful on its own for fast matching. Comparing two raw point clouds of roughly 30,000 coordinates means first aligning them for head pose and distance and then measuring point-to-point differences, which is far too slow and fragile to repeat on every unlock. Instead, the on-device neural network performs dimensionality reduction: it compresses the large geometry map into a short, fixed-length array of numbers that captures what distinguishes your face.
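Face ID's network architecture is not public, so this sketch swaps in a much simpler technique: a fixed random projection, which compresses a long depth map into a short vector but has learned nothing about faces. It only illustrates the shape of the operation (thousands of depth values in, a short fixed-length vector out). `make_projection`, `embed`, the 128-dimensional output, and the unit-length normalization are hypothetical choices, and the input is scaled down to 1,000 dots so the pure-Python example runs quickly.

```python
import math
import random
from typing import List

def make_projection(input_dim: int, output_dim: int, seed: int = 0) -> List[List[float]]:
    """Fixed random projection matrix (output_dim rows x input_dim columns).

    Stand-in for a trained network: it shortens the input but knows nothing about faces.
    """
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(output_dim)
    return [[rng.gauss(0.0, 1.0) * scale for _ in range(input_dim)]
            for _ in range(output_dim)]

def embed(depths_mm: List[float], projection: List[List[float]]) -> List[float]:
    """Map a depth map to a short, unit-length vector."""
    mean = sum(depths_mm) / len(depths_mm)
    centred = [d - mean for d in depths_mm]  # drop the overall distance to the camera
    vec = [sum(w * x for w, x in zip(row, centred)) for row in projection]
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm > 0 else vec  # a perfectly flat input stays all zeros

# Usage: 1,000 depth samples in, 128 numbers out.
projection = make_projection(input_dim=1000, output_dim=128)
depth_map = [300.0 + (i % 37) * 0.5 for i in range(1000)]  # synthetic relief, hypothetical
vector = embed(depth_map, projection)
print(len(vector))  # 128
```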

A loose analogy is a hash function that condenses a large file into a short string, with one crucial difference: a cryptographic hash changes completely when a single byte changes, whereas a feature vector is designed so that two scans of the same face land close together and scans of different faces land far apart. When the device authenticates you, it calculates the distance between the live vector and the originally stored vector. If that distance falls within a strict threshold, the system confirms your identity. It is an efficient boundary check rather than a heavy pixel-by-pixel image comparison.
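The comparison step can be sketched as a plain distance check. Apple does not document which distance metric or threshold Face ID uses, so Euclidean distance and `MATCH_THRESHOLD = 0.6` below are assumptions for illustration only.

```python
import math
from typing import Sequence

MATCH_THRESHOLD = 0.6  # hypothetical; a real system tunes this on large datasets

def euclidean_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Straight-line distance between two feature vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def is_match(live: Sequence[float], enrolled: Sequence[float],
             threshold: float = MATCH_THRESHOLD) -> bool:
    """Accept only if the live vector lies within `threshold` of the enrolled one."""
    return euclidean_distance(live, enrolled) <= threshold

enrolled = [0.6, 0.8, 0.0]
print(is_match([0.62, 0.78, 0.05], enrolled))  # True: small difference, same person
print(is_match([0.0, 0.6, 0.8], enrolled))     # False: far away in vector space
```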

What are the steps in the Face ID authentication pipeline?

The pipeline is a real-time relay from hardware to software that turns reflected light into numbers a security check can act on. It starts with infrared emission and reflection, moves into depth mapping, and finishes with neural network vectorization and a match decision. Here is how the data flows from the sensor to the final decision during a single authentication request:

1. Emission: The dot projector casts more than 30,000 infrared dots onto the target surface.

2. Reflection: The dots land at positions that depend on the physical depth and contours of the face.

3. Capture: An infrared camera reads the distorted dot pattern.

4. Mapping: The system constructs a 3D geometry map from the captured depth data.

5. Vectorization: A neural network translates the 3D map into a distinctive feature vector.

6. Comparison: The system calculates the mathematical distance between the new vector and the enrolled vector to approve or deny access.
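To tie the six steps together, here is a toy, self-contained simulation. Every value in it is hypothetical: a one-dimensional "face" profile stands in for a real head, a banded depth average stands in for the neural network, and the calibration numbers and 3 mm threshold are made up. It only shows how data flows from emitted dots to an accept or reject decision.

```python
import math
import random
from typing import Callable, List

# Hypothetical values chosen only for illustration.
F_PX, BASELINE_MM = 600.0, 25.0  # IR camera focal length (px), projector-to-camera baseline (mm)
WIDTH_PX, BANDS = 640.0, 8       # sensor width (px) and number of bands in the toy feature vector
THRESHOLD_MM = 3.0               # largest feature distance still accepted as a match

def emit(n_dots: int = 2000, seed: int = 1) -> List[float]:
    """1. Emission: a fixed pseudo-random dot pattern (reference x-positions, px)."""
    rng = random.Random(seed)
    return [rng.uniform(0.0, WIDTH_PX) for _ in range(n_dots)]

def reflect_and_capture(pattern: List[float], surface: Callable[[float], float],
                        noise_seed: int) -> List[float]:
    """2-3. Reflection and capture: each dot shifts by f*B/Z, plus a little sensor noise."""
    rng = random.Random(noise_seed)
    return [x + F_PX * BASELINE_MM / surface(x) + rng.gauss(0.0, 0.05) for x in pattern]

def map_depth(pattern: List[float], observed: List[float]) -> List[float]:
    """4. Mapping: triangulate each dot's depth (mm) from its observed shift."""
    return [F_PX * BASELINE_MM / (obs - ref) for ref, obs in zip(pattern, observed)]

def vectorize(pattern: List[float], depths: List[float]) -> List[float]:
    """5. Vectorization (stand-in for the neural network): mean relief per band."""
    sums, counts = [0.0] * BANDS, [0] * BANDS
    for x, z in zip(pattern, depths):
        band = min(int(x / WIDTH_PX * BANDS), BANDS - 1)
        sums[band] += z
        counts[band] += 1
    if 0 in counts:
        raise ValueError("every band needs at least one dot")
    means = [s / c for s, c in zip(sums, counts)]
    overall = sum(means) / BANDS
    return [m - overall for m in means]  # keep the shape, drop the distance to the camera

def compare(live: List[float], enrolled: List[float]) -> bool:
    """6. Comparison: accept only if the Euclidean distance is within the threshold."""
    return math.dist(live, enrolled) <= THRESHOLD_MM

def scan(pattern: List[float], surface: Callable[[float], float], noise_seed: int) -> List[float]:
    """Steps 2-5 for one authentication attempt."""
    observed = reflect_and_capture(pattern, surface, noise_seed)
    return vectorize(pattern, map_depth(pattern, observed))

def face(x: float) -> float:
    """Toy 1-D face profile: 400 mm away with a 30 mm 'nose' bump in the middle."""
    return 400.0 - 30.0 * math.exp(-((x - 320.0) / 60.0) ** 2)

pattern = emit()
enrolled = scan(pattern, face, noise_seed=10)
print(compare(scan(pattern, face, noise_seed=11), enrolled))             # True: same face, new scan
print(compare(scan(pattern, lambda x: 400.0, noise_seed=12), enrolled))  # False: flat photo
```

Because the flat "photo" has no relief, its feature vector sits near zero and far from the enrolled one, which previews the spoofing argument in the next section.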

Why can't you trick Face ID with a photograph?

A printed photo or a digital screen is a two-dimensional flat plane lacking any physical depth. Because Face ID authenticates based on 3D geometry rather than visual appearance, a flat surface immediately fails the depth check. The resulting depth map simply doesn't match human topography.

Let's say your team is building a fintech app that handles high-value wire transfers. If your biometric authentication relied purely on pixel-based visual matching (for example, faces matched from a standard webcam feed), an attacker could bypass it simply by holding a photo of the victim on a high-resolution iPad up to the lens.

But because Face ID relies on infrared depth mapping, a screen or printed photo looks like a perfectly flat sheet of glass or paper to the IR sensor. The neural network expects to see a nose protruding several centimeters and eye sockets recessed into the skull. A flat plane produces a geometry map with essentially no depth variation. When that flat map is vectorized, the resulting embedding lands far from the authorized feature vector in the latent space, and the system denies access.
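Face ID's real spoofing defenses are learned and not publicly specified, so the following is only a hand-written illustration of the flat-plane argument. It fits a least-squares plane to a set of (x, y, depth) points and measures how far the points deviate from it. A printed photo, even one tilted toward the camera, fits a plane almost perfectly; a face does not. The `MIN_RELIEF_RMS_MM` threshold and the synthetic face shape are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]  # (x_mm, y_mm, depth_mm)
MIN_RELIEF_RMS_MM = 2.0  # hypothetical: minimum RMS deviation from a plane to count as 3D

def _det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix by cofactor expansion."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_plane(points: Sequence[Point]) -> Tuple[float, float, float]:
    """Least-squares plane z = a*x + b*y + c, solved from the normal equations."""
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, float(len(points))]]
    rhs = [sxz, syz, sz]
    det = _det3(m)
    if det == 0:
        raise ValueError("points are degenerate (for example, all on one line)")
    coeffs: List[float] = []
    for col in range(3):  # Cramer's rule: swap one column for the right-hand side
        swapped = [row[:] for row in m]
        for r in range(3):
            swapped[r][col] = rhs[r]
        coeffs.append(_det3(swapped) / det)
    return coeffs[0], coeffs[1], coeffs[2]

def relief_rms(points: Sequence[Point]) -> float:
    """Root-mean-square distance (mm) of the points from their best-fit plane."""
    a, b, c = fit_plane(points)
    residuals = [z - (a * x + b * y + c) for x, y, z in points]
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))

def has_real_depth(points: Sequence[Point], min_rms_mm: float = MIN_RELIEF_RMS_MM) -> bool:
    """True if the surface deviates from a flat plane by at least min_rms_mm."""
    return relief_rms(points) >= min_rms_mm

grid = [(float(x), float(y)) for x in range(-60, 61, 10) for y in range(-60, 61, 10)]
face = [(x, y, 400.0 - 30.0 * math.exp(-(x * x + y * y) / 1600.0)) for x, y in grid]
photo = [(x, y, 400.0 + 0.3 * x) for x, y in grid]  # flat sheet tilted toward the camera
print(has_real_depth(face), has_real_depth(photo))  # True False
```

Fitting a plane rather than checking raw depth range matters: a tilted photo spans many millimeters front to back, yet still has almost no deviation from a plane.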

What else do developers ask about Face ID?

When integrating biometric security, engineers often have secondary questions about privacy limits and hardware edge cases. Understanding how the device stores data and adapts to changes helps clarify system limitations. Here are three common follow-up queries regarding the lifecycle of a facial feature vector.

Does Face ID store photos of my face?

No. The system does not store visible-light images or full 3D point clouds of your face. It retains only the mathematical representation, or feature vector, derived from your facial geometry. This data is encrypted, protected by the device's Secure Enclave, and never leaves the phone.

Can Face ID adapt if I wear glasses or grow a beard?

Yes. Because matching is based on feature vectors rather than exact images, the system can keep its stored representation up to date. If a newly generated vector is close enough to authenticate you, the system subtly adjusts the stored baseline vector toward it. This accounts for gradual physical changes over time without requiring you to re-enroll.
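Apple does not document exactly how Face ID folds new scans into the stored template, so this is a minimal sketch under an assumed rule: if a live vector matches, move the stored vector a small step toward it (an exponential moving average); if it does not match, leave the template untouched. `MATCH_THRESHOLD`, `LEARNING_RATE`, and `authenticate_and_adapt` are hypothetical.

```python
import math
from typing import List, Tuple

MATCH_THRESHOLD = 0.6  # hypothetical acceptance distance
LEARNING_RATE = 0.1    # hypothetical: how far each accepted scan pulls the template

def authenticate_and_adapt(live: List[float], stored: List[float],
                           threshold: float = MATCH_THRESHOLD,
                           rate: float = LEARNING_RATE) -> Tuple[bool, List[float]]:
    """Return (accepted, template). Only accepted scans are blended into the template."""
    if math.dist(live, stored) > threshold:
        return False, stored  # rejected: never learn from a non-matching face
    updated = [(1.0 - rate) * s + rate * x for s, x in zip(stored, live)]
    return True, updated

# Usage: appearance drifts a little each day, so every scan still matches.
template = [0.0, 0.0]
for day in range(1, 21):
    accepted, template = authenticate_and_adapt([0.05 * day, 0.0], template)
print(authenticate_and_adapt([1.0, 0.0], [0.0, 0.0])[0])  # False against the original template
print(authenticate_and_adapt([1.0, 0.0], template)[0])    # True against the adapted template
```

Gating the update on a successful match is the key design choice here: a sudden, large change is rejected and leaves the template unchanged, so an impostor cannot gradually "teach" the system a different face in one attempt.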

How accurate is the infrared dot projector?

The hardware is exceptionally precise, mapping facial contours with a depth accuracy down to half a millimeter. This means even small topographic variations are captured and fed into the neural network. As a result, spoofing the system with a physical replica of a face is very difficult.
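How fine a structured-light system can resolve depth depends on geometry. Differentiating the triangulation relation Z = f * B / d gives |dZ| = Z^2 / (f * B) * |dd|, so a fixed sub-pixel error in locating a dot becomes a depth error that grows with the square of the distance. The small calculator below uses hypothetical calibration values (600 px focal length, 25 mm baseline, 0.1 px dot-localization error); it is not Face ID's actual specification.

```python
def depth_resolution_mm(depth_mm: float, focal_length_px: float,
                        baseline_mm: float, disparity_step_px: float) -> float:
    """Approximate smallest resolvable depth change at a given distance.

    From Z = f*B/d it follows that |dZ/dd| = Z**2 / (f*B), so a disparity
    uncertainty of disparity_step_px maps to this many millimeters of depth.
    """
    return depth_mm ** 2 / (focal_length_px * baseline_mm) * disparity_step_px

# Hypothetical calibration: 600 px focal length, 25 mm baseline, 0.1 px localization error.
for distance in (250.0, 300.0, 500.0):
    print(f"{distance:.0f} mm -> {depth_resolution_mm(distance, 600.0, 25.0, 0.1):.2f} mm")
```

Doubling the distance quadruples the depth error, which is why sub-millimeter figures only make sense at close range.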