Stop Guessing: Why DORA Metrics Are the Only Way to Scale Without Breaking Everything

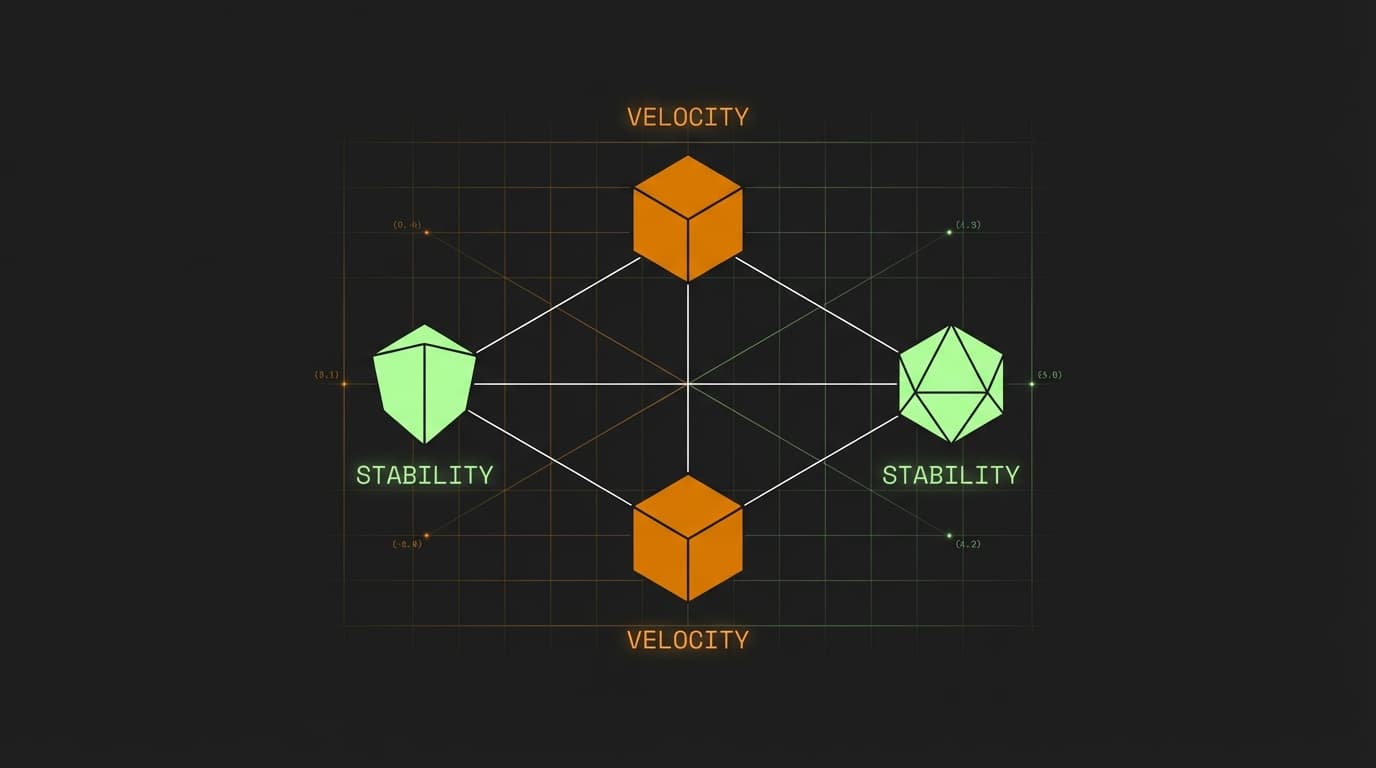

DORA metrics provide an objective framework to measure software delivery performance through four key data points: Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Time to Restore Service. By tracking these, teams can move beyond gut feelings and identify whether they are prioritizing speed at the cost of stability or vice versa.

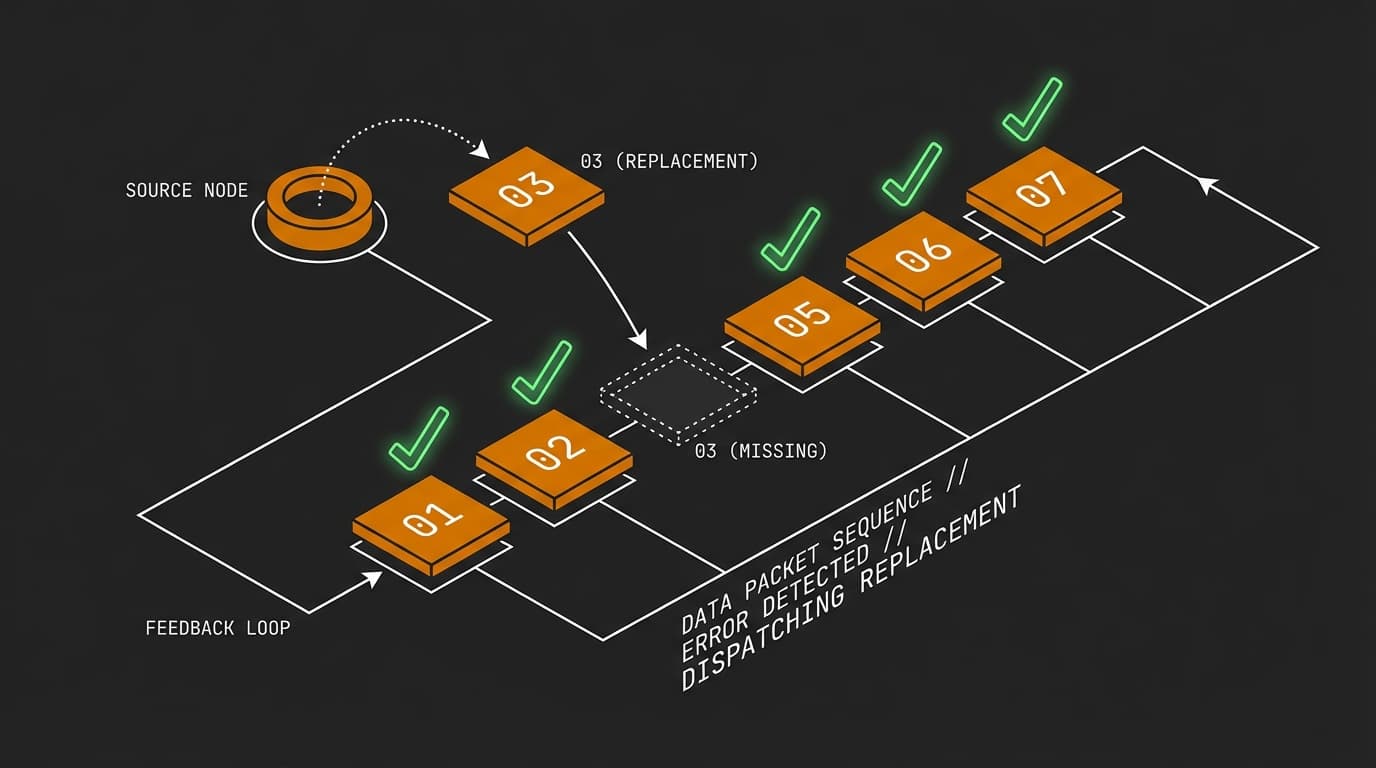

It is 2:00 AM. Your pager is screaming because a rogue microservice is eating memory, and the latest deployment, which took forty minutes to crawl through a brittle CI pipeline, just hit production with a silent failure. In that moment, it does not matter how many Jira tickets your team closed this week. If your delivery mechanism is broken, you are just spinning your wheels while the technical debt piles up.

Most teams I encounter are stuck in a guessing game. They feel like they are moving fast, or they assume they are stable because they only ship once a month. But without data, you are likely either flooring the gas while the wheels are coming off or idling in the driveway out of fear. DORA metrics exist to stop the guesswork and show you exactly where your team sits on the spectrum of delivery excellence.

How do I know if my team is actually moving in the right direction?

You determine your team’s direction by measuring the balance between velocity and stability using a standardized set of metrics rather than subjective project timelines. If you can track how often you ship and how often those shipments break, you can identify if you are building a sustainable engine or a ticking time bomb.

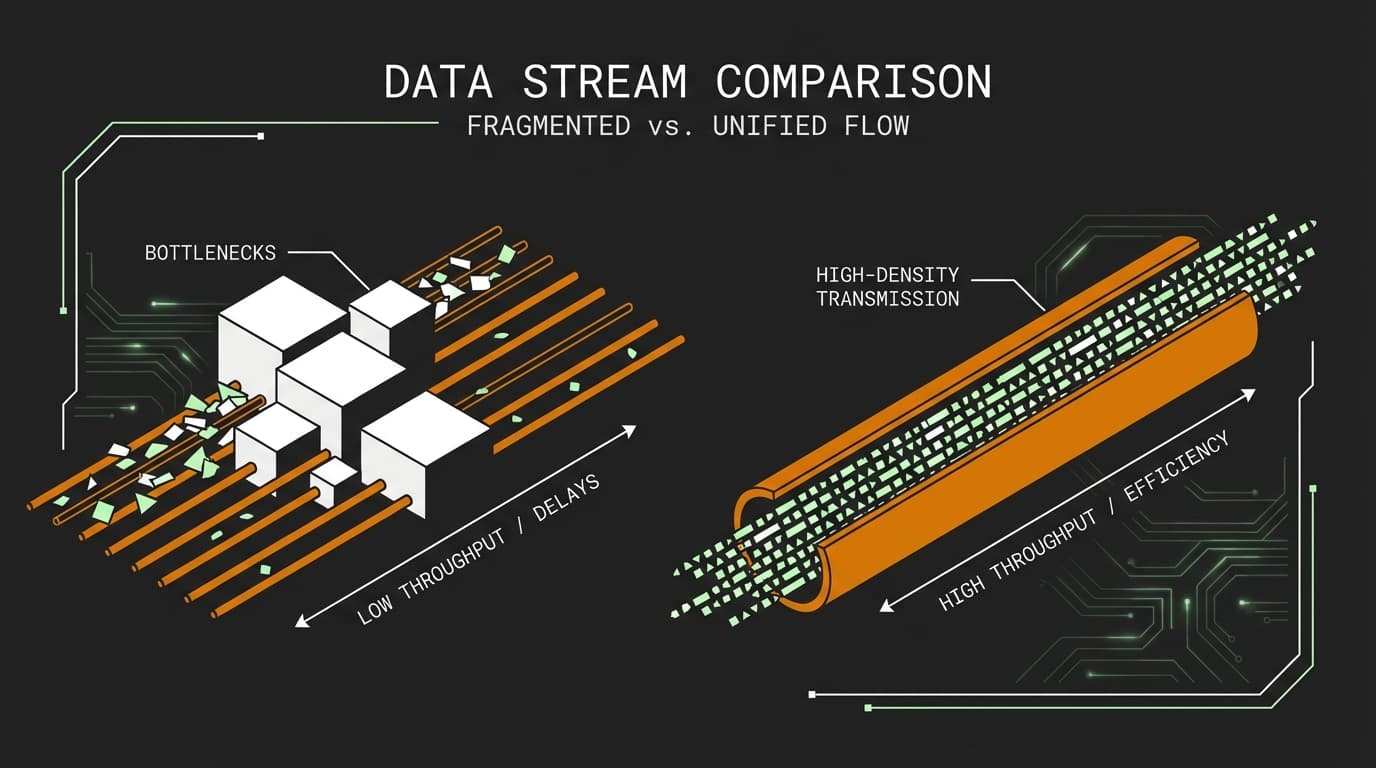

Imagine your team is managing a high-traffic e-commerce platform. You might be pushing code ten times a day, but if half of those pushes require an immediate hotfix, your 'velocity' is an illusion. Conversely, if you have zero bugs but it takes six weeks to change a button color, your 'stability' is killing the business. DORA metrics force you to look at both sides of the coin simultaneously, preventing one from cannibalizing the other.
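
To make the two-sided view concrete, here is a minimal sketch that computes Deployment Frequency and Change Failure Rate from the same deployment log. The record shape (`deployedAt`, `causedFailure`), the sample data, and the `summarizeDelivery` helper are assumptions made up for illustration; a real setup would pull these records from your CI/CD or release tooling.

```javascript
// Hypothetical deployment log: one record per production deployment.
// `causedFailure` marks deployments that needed a hotfix, rollback, or patch.
const deployments = [
  { deployedAt: '2024-03-04T09:15:00Z', causedFailure: false },
  { deployedAt: '2024-03-04T13:40:00Z', causedFailure: true },
  { deployedAt: '2024-03-05T10:05:00Z', causedFailure: false },
  { deployedAt: '2024-03-06T16:30:00Z', causedFailure: true },
  { deployedAt: '2024-03-07T11:20:00Z', causedFailure: false },
];

// Returns one velocity number and one stability number for the same period,
// so neither can be read without the other.
function summarizeDelivery(records, periodDays) {
  if (records.length === 0 || periodDays <= 0) {
    return { deploymentsPerDay: 0, changeFailureRate: 0 };
  }
  const failures = records.filter((r) => r.causedFailure).length;
  return {
    deploymentsPerDay: records.length / periodDays,
    changeFailureRate: failures / records.length,
  };
}

const summary = summarizeDelivery(deployments, 5);
console.log(`Deployments per day: ${summary.deploymentsPerDay.toFixed(2)}`);
console.log(`Change Failure Rate: ${(summary.changeFailureRate * 100).toFixed(0)}%`);
```

Reporting both values from one function is a deliberate choice: a dashboard that shows only the first number is exactly the 'velocity illusion' described above.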

What are the four DORA metrics and why do they matter?

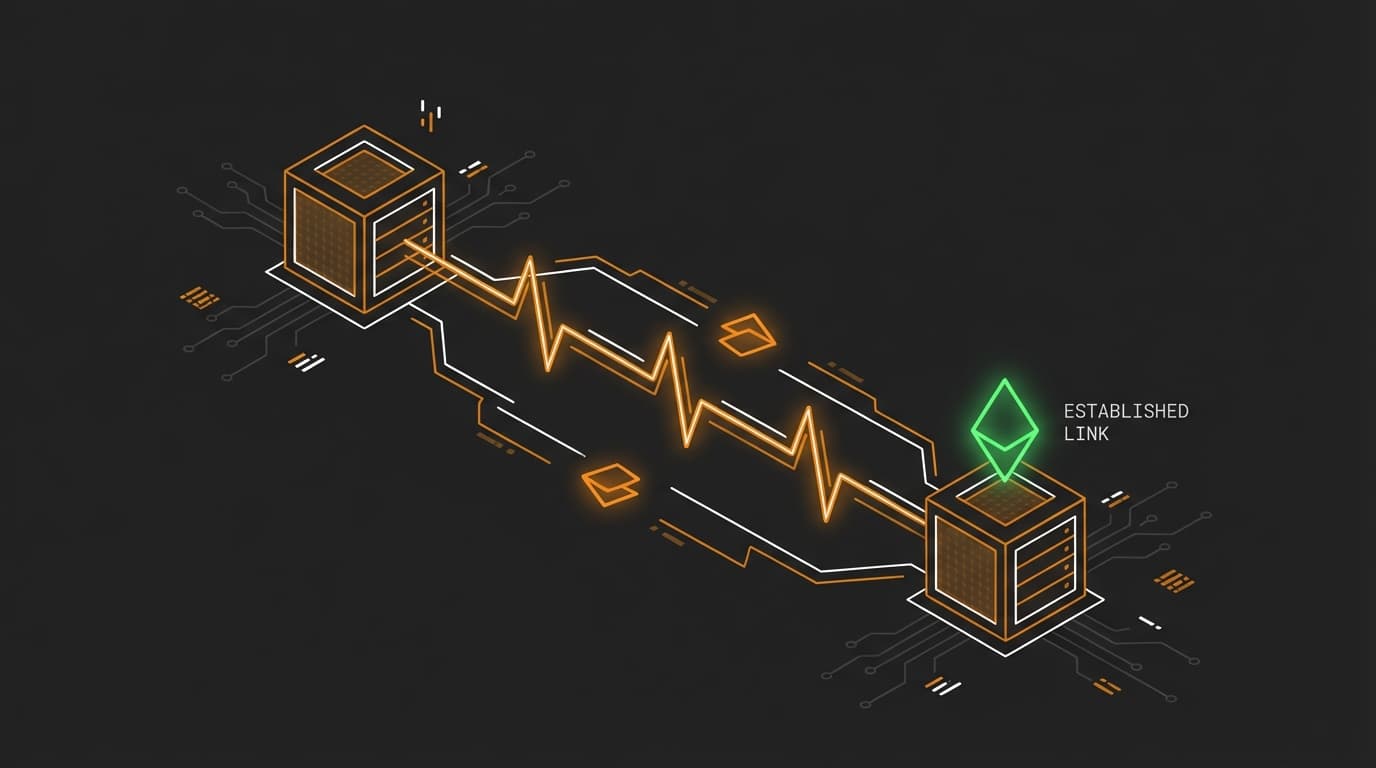

The four DORA metrics are Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Time to Restore Service. They matter because DORA’s State of DevOps research uses them to group teams into performance tiers, from 'Low' to 'Elite', and links those tiers to both technical practices and business outcomes.

| Metric | Purpose | Engineering Focus |
|---|---|---|
| Deployment Frequency | Velocity | Small batch sizes and automated release gates. |
| Lead Time for Changes | Velocity | CI pipeline efficiency and code review latency. |
| Change Failure Rate | Stability | Automated testing and environment parity. |
| Time to Restore Service | Stability | Observability, logging, and automated rollbacks. |

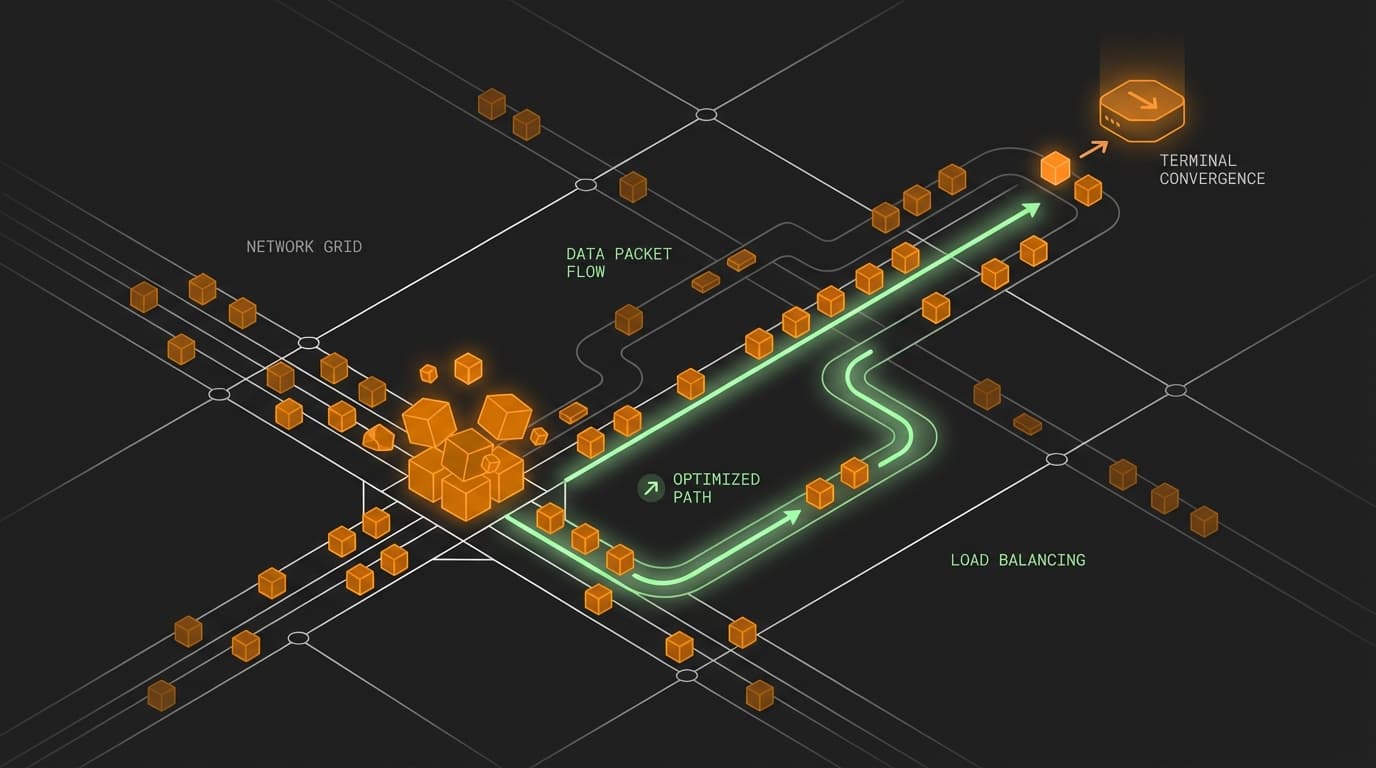

By monitoring these, you can pinpoint the friction. For instance, a high Lead Time for Changes often points to a bottleneck in the manual QA process or a bloated CI suite that engineers have learned to ignore.
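
One way to find where the time goes is to split Lead Time for Changes into stages. The sketch below assumes each change carries three timestamps (`committedAt`, `mergedAt`, `deployedAt`); those field names, the sample data, and the helper functions are hypothetical and would need to be mapped onto whatever your version control and CI systems actually expose.

```javascript
// Hypothetical change records; all field names are assumptions for illustration.
const changes = [
  { committedAt: '2024-03-04T09:00:00Z', mergedAt: '2024-03-04T15:00:00Z', deployedAt: '2024-03-04T16:00:00Z' },
  { committedAt: '2024-03-05T10:00:00Z', mergedAt: '2024-03-06T10:00:00Z', deployedAt: '2024-03-06T12:00:00Z' },
  { committedAt: '2024-03-06T08:00:00Z', mergedAt: '2024-03-06T10:00:00Z', deployedAt: '2024-03-06T10:30:00Z' },
];

// Elapsed hours between two ISO 8601 timestamps.
const hoursBetween = (start, end) => (new Date(end) - new Date(start)) / 36e5;

// Median is less sensitive than the mean to one unusually slow change.
function median(values) {
  if (values.length === 0) return 0;
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Splits lead time into commit-to-merge (review) and merge-to-deploy (pipeline).
function leadTimeBreakdown(records) {
  return {
    reviewHours: median(records.map((c) => hoursBetween(c.committedAt, c.mergedAt))),
    pipelineHours: median(records.map((c) => hoursBetween(c.mergedAt, c.deployedAt))),
    totalHours: median(records.map((c) => hoursBetween(c.committedAt, c.deployedAt))),
  };
}

const breakdown = leadTimeBreakdown(changes);
console.log(breakdown);
```

If `reviewHours` dominates, the bottleneck is likely review or manual QA latency; if `pipelineHours` dominates, look at the CI suite. Note that the stage medians will not generally add up to the total median, since each is computed independently.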

How does measuring these metrics change team behavior?

Measuring DORA metrics shifts a team’s culture from 'shipping features' to 'optimizing the delivery pipeline,' which tends to reduce technical debt and improve developer experience. When the data shows that a high Change Failure Rate is tanking your velocity, it provides a data-backed justification to stop feature work and invest in better testing infrastructure.

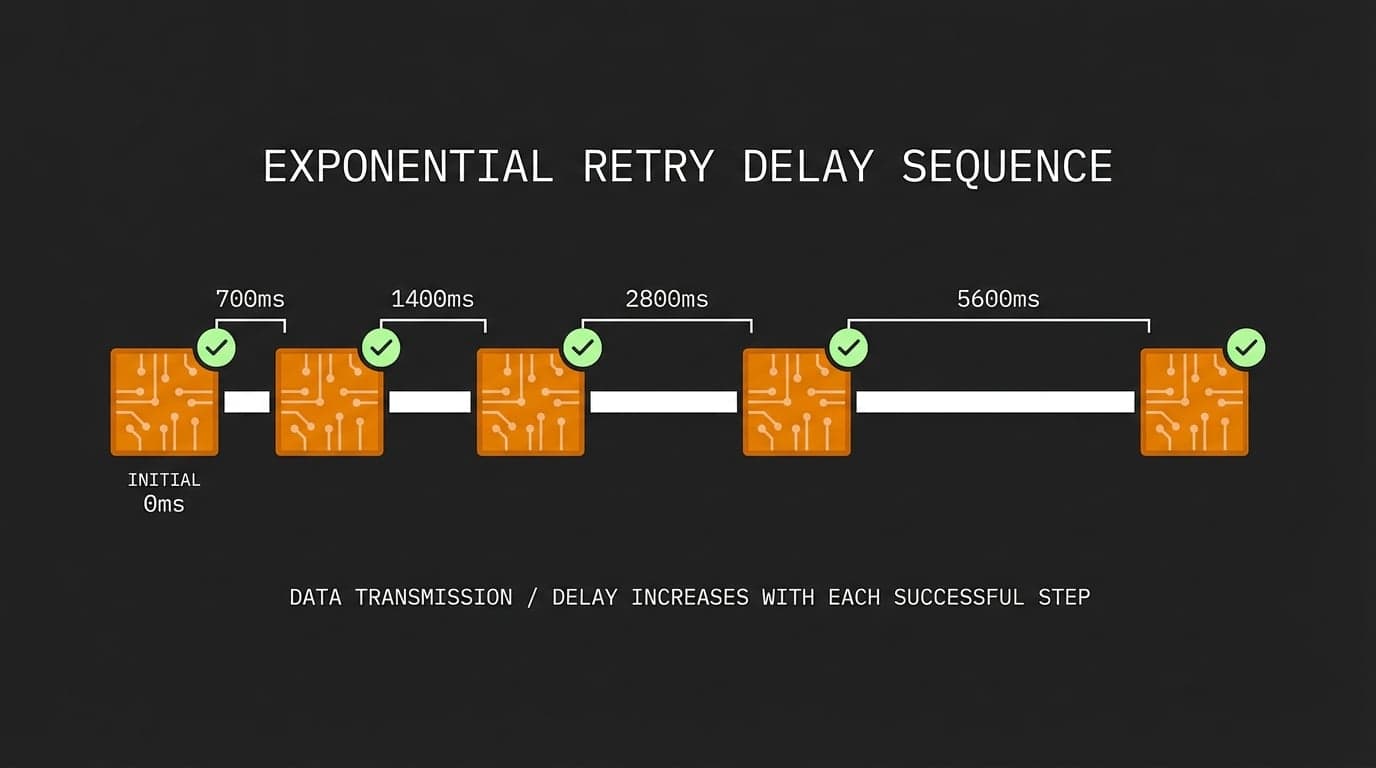

Instead of arguing with product owners about why you need two weeks for refactoring, you can show the trend lines. If the Time to Restore Service has tripled over the last quarter, it is a clear signal that the system has become too complex to manage. The measurement itself acts as a forcing function for better engineering practices, like trunk-based development or improved observability.
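
A trend line like that can be produced with very little code. The following is a rough sketch that averages Time to Restore Service per calendar quarter and compares two quarters. The `created_at` and `resolved_at` field names, the sample incidents, and both helper functions are assumptions for illustration, not the output of any particular incident tool.

```javascript
// Hypothetical resolved incidents; field names are assumed, not from a real API.
const resolvedIncidents = [
  { created_at: '2023-10-12T02:00:00Z', resolved_at: '2023-10-12T02:30:00Z' },
  { created_at: '2023-11-20T14:00:00Z', resolved_at: '2023-11-20T14:50:00Z' },
  { created_at: '2024-01-15T09:00:00Z', resolved_at: '2024-01-15T10:30:00Z' },
  { created_at: '2024-02-27T22:00:00Z', resolved_at: '2024-02-28T00:30:00Z' },
];

// Maps an ISO timestamp to a label such as "2024-Q1" (UTC calendar quarters).
function quarterKey(isoDate) {
  const d = new Date(isoDate);
  const quarter = Math.floor(d.getUTCMonth() / 3) + 1;
  return `${d.getUTCFullYear()}-Q${quarter}`;
}

// Average minutes from incident creation to resolution, grouped by quarter.
function avgRestoreMinutesByQuarter(records) {
  const buckets = new Map();
  for (const incident of records) {
    const minutes = (new Date(incident.resolved_at) - new Date(incident.created_at)) / 60000;
    const key = quarterKey(incident.created_at);
    const bucket = buckets.get(key) ?? { total: 0, count: 0 };
    bucket.total += minutes;
    bucket.count += 1;
    buckets.set(key, bucket);
  }
  return new Map([...buckets].map(([key, b]) => [key, b.total / b.count]));
}

const trend = avgRestoreMinutesByQuarter(resolvedIncidents);
const ratio = trend.get('2024-Q1') / trend.get('2023-Q4');
console.log(`Time to Restore changed by a factor of ${ratio.toFixed(1)} quarter over quarter`);
```

With only a handful of incidents per quarter, a single long outage can dominate the average, so it is worth showing the incident count next to the number before using it to argue for refactoring time.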

Which engineering practices actually move the needle on DORA?

Improving DORA metrics requires a shift toward automated, small-batch delivery and away from long-lived feature branches and manual gates. Practices like trunk-based development directly increase Deployment Frequency, while robust automated testing suites are the primary lever for lowering your Change Failure Rate.

You can even automate the collection of this data. For example, a short script can query your incident management API and compute your average Time to Restore Service, often reported as MTTR (Mean Time to Recovery), so the number stays up to date:

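```javascript
// Conceptual sketch: average recovery time from a placeholder incident API.
// Run as an ES module (top-level await); endpoint and field names are illustrative.
const response = await fetch('/api/v1/incidents?status=resolved');
if (!response.ok) throw new Error(`Incident API returned ${response.status}`);
const incidents = await response.json();

// Sum each incident's duration in minutes (resolved_at minus created_at).
const totalMinutes = incidents.reduce((acc, incident) => {
  const durationMs = new Date(incident.resolved_at) - new Date(incident.created_at);
  return acc + durationMs / 1000 / 60;
}, 0);

// Guard against an empty result set, which would otherwise produce NaN.
const avgRecoveryMinutes = incidents.length ? totalMinutes / incidents.length : 0;
console.log(`Average Time to Restore: ${avgRecoveryMinutes.toFixed(2)} mins`);
```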

When you start seeing these numbers in a dashboard, the team naturally begins to optimize for them. You stop guessing whether the new CI tool helped and start seeing the Lead Time for Changes drop in real time.

FAQ

Is Deployment Frequency the most important DORA metric? No, none of the metrics should be viewed in isolation. If you only track Deployment Frequency, teams might start shipping tiny, meaningless changes just to pump the numbers, which is why you must balance it with Change Failure Rate.

How can a team reduce its Lead Time for Changes? The most effective approach is to move toward trunk-based development and minimize the time code spends waiting for review. Smaller Pull Requests help the most here, because they are quicker to review and quicker to get through the pipeline.

Do DORA metrics apply to legacy monoliths? Yes, though the numbers will look different from those of a microservices architecture. Even in a monolith, tracking these metrics helps you identify the specific pain points, such as a three-hour build process, that are preventing you from being a high-performing team.

Cheers.